A/B Testing & CRO

Stop guessing what converts. Start proving it.

Full-cycle A/B testing for Shopify stores — research, design, development, and analysis. Every change backed by data. Every winner hard-coded into your store, every loser turned into the next hypothesis.

Coverage

What we test

Every part of your Shopify store that a visitor touches is a testing opportunity. Here's where we look first.

Product Pages

Hero layout, image gallery order, description copy, trust signals, CTA placement and copy — the highest-leverage page on any Shopify store.

Homepage & Hero

Above-the-fold messaging, hero imagery, value proposition clarity, and how quickly new visitors understand what you sell and why they should care.

Cart & Checkout

Cart drawer layout, upsell and cross-sell placement, trust signals at the critical decision point, and shipping threshold messaging.

Landing Pages

Paid traffic landing pages where small improvements compound quickly. Headline variants, social proof ordering, and offer framing tested against real ad traffic.

Collections & Navigation

Category structure, filter UX, sort order defaults, and menu labels — the paths visitors take to find products before they even reach a product page.

Site-Wide Elements

Announcement bars, sticky headers, exit-intent overlays, and global trust signals — elements that influence every session regardless of where it starts.

Selected work

Tests that moved the needle

Real stores, real traffic, and results validated before any winner goes live.

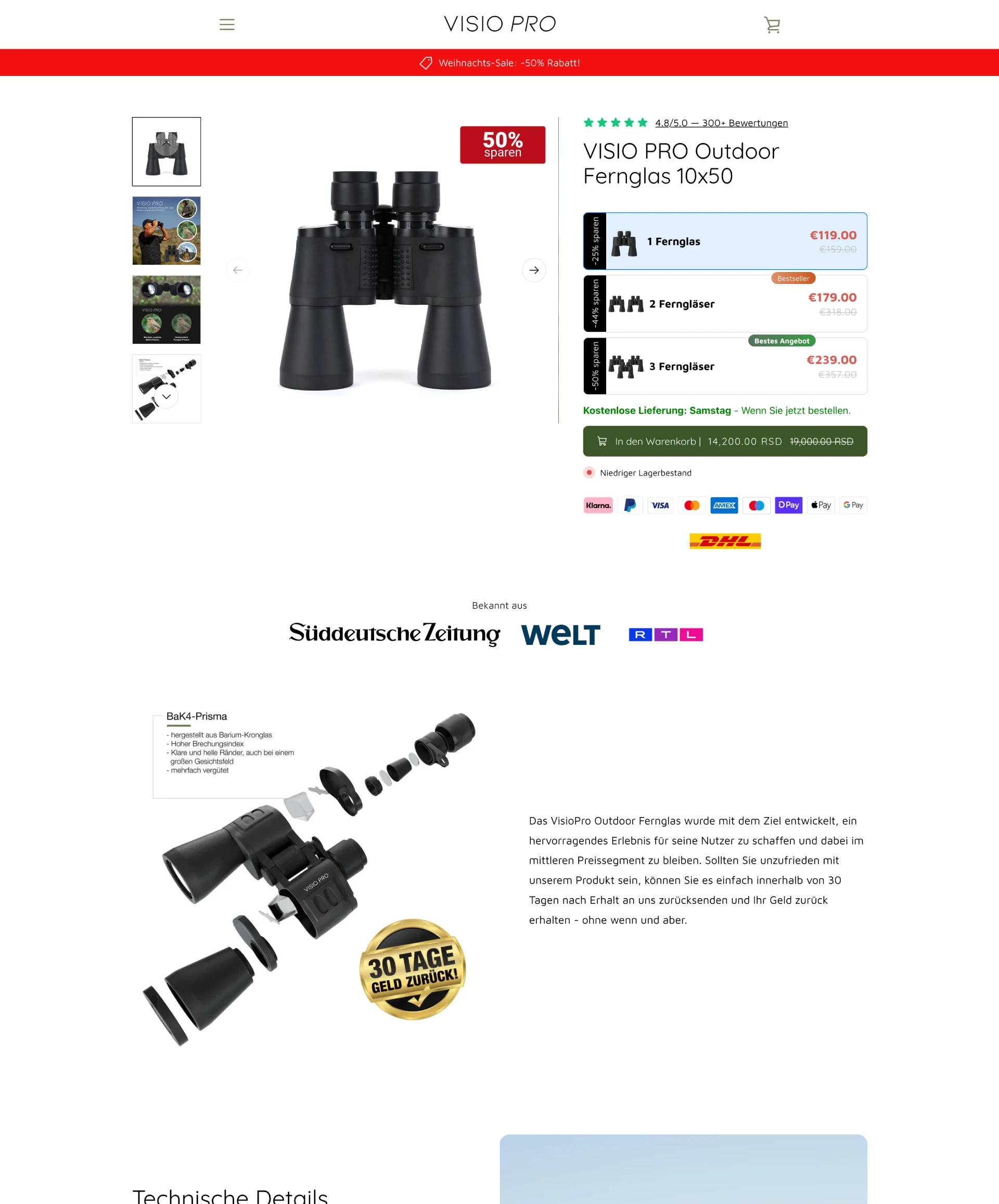

Visio Pro

Visio Pro, a German optics brand, were sending paid traffic to a default Shopify product page. We built a custom product page variant and A/B tested it against the original — isolating layout, trust signal placement, and gallery format as separate tests. The winning variant replaced the default across all paid campaigns.

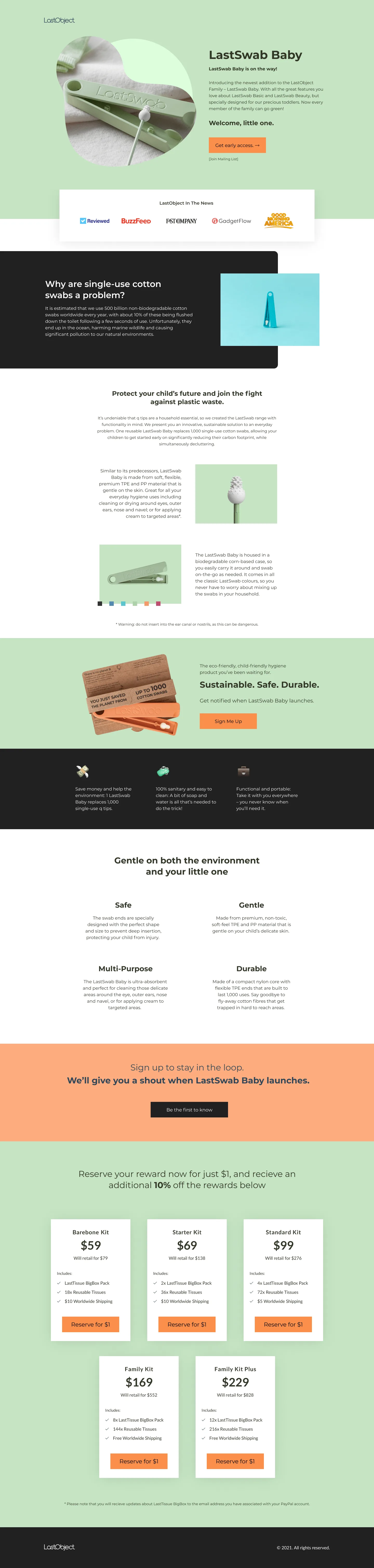

LastObject

LastObject's paid campaigns were sending traffic to a generic product page. We designed and built a dedicated landing page, then tested two variants — one leading with the sustainability angle, one leading with product utility. The winning variant was launched as the permanent campaign destination.

DNSFilter

DNSFilter needed to improve trial sign-up rates from their paid traffic. We audited the existing flow, identified the top friction points, and ran iterative tests on hero copy, social proof placement, and CTA design — taking the conversion rate from 2.36% to 23.44% over a series of validated tests.

Full-cycle delivery

Everything handled, end to end

No partial handoffs. Research through to winner deployment — every step covered.

CRO Audit & Research

Analytics deep-dive, heatmap analysis, session recordings, and funnel data — building an evidence base for every hypothesis before a line of code is written.

Hypothesis Development

Each test has a clearly stated hypothesis, a predicted outcome, and a success metric defined in advance — so results mean something and learnings carry forward.

Variant Design

Test variants designed in Figma — mobile-first, matching your existing brand, and with enough visual clarity to isolate what's actually being tested.

Shopify Development

Variants built directly in Shopify Liquid — no fragile third-party overlays. Tests run cleanly and winners are implemented as permanent code, not a permanent testing script.

QA & Launch

Cross-device and cross-browser QA before every test goes live. Daily monitoring during the run to catch any rendering issues before they skew results.

Results & Reporting

Statistical significance confirmed before any winner is called. Clear reporting on revenue impact — not just CVR — and documented learnings that feed the next test cycle.
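As an illustration of what "statistical significance confirmed" can mean for a conversion-rate test, here is a minimal sketch of a two-proportion z-test using only the Python standard library. It is one common way to compare two variants, not necessarily the exact method used on every test, and the function name and sample numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rate."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        # Zero or total conversion in both arms: there is nothing to compare.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Illustrative numbers only, not results from any store mentioned on this page.
z, p = two_proportion_z_test(conv_a=120, n_a=4000, conv_b=156, n_b=4000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 5%: {p < 0.05}")
```

A check like this only covers conversion rate. Revenue impact needs its own comparison (for example revenue per visitor), because a variant can convert more often while lowering order value.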

How a testing programme runs

Audit & Research

Week one: analytics audit, heatmap review, session recordings, and a prioritised backlog of test ideas ranked by expected impact.

Design & Build

Hypothesis defined, variant designed in Figma, and built in Shopify. QA on staging before anything touches live traffic.

Launch & Monitor

Test goes live. Daily monitoring for rendering issues. Statistical significance reached before any result is called — no premature winners.

Deploy & Iterate

Winners hard-coded into your theme, testing scripts removed. Losers documented and their learnings fed directly into the next hypothesis.

Get started

Let's talk about your store

Tell me about your store, your traffic volume, and what you've already tried. I'll come back with an honest view on what's worth testing and what to expect.

Or schedule a call

Prefer to walk through your store on a call? Book a free 30-minute session and we can identify the highest-impact testing opportunities together.

Frequently Asked Questions

How much traffic do I need before A/B testing makes sense?

As a rough guide, you need enough traffic to reach statistical significance within a reasonable timeframe, typically 2–4 weeks per test. For most Shopify stores, that's around 500–1,000 sessions per week on the page being tested, though it depends on your baseline conversion rate and the size of the effect you're looking for. If you're below that threshold, we can start with qualitative research and direct implementation of clear fixes, then move to split tests once traffic has grown.
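To show how baseline conversion rate and effect size drive the traffic requirement, here is a rough sketch of the standard sample-size estimate for comparing two proportions. The 5% significance level, 80% power, function names, and example inputs are all assumptions chosen for illustration, so treat the output as a planning estimate and not a guarantee.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sessions_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate sessions needed in EACH variant to detect the given lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_power = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_power * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def weeks_to_run(baseline_rate, relative_lift, weekly_sessions, variants=2):
    """Weeks of traffic needed when sessions are split evenly across variants."""
    needed = sessions_per_variant(baseline_rate, relative_lift) * variants
    return ceil(needed / weekly_sessions)


# Hypothetical scenarios: smaller expected lifts need far more traffic.
for lift in (0.10, 0.25, 0.50):
    n = sessions_per_variant(baseline_rate=0.03, relative_lift=lift)
    print(f"relative lift {lift:.0%}: about {n} sessions per variant")
```

The design choice worth noting is that the required sample grows roughly with the inverse square of the effect size, which is why bold changes are usually the sensible first tests on lower-traffic stores.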

How many tests can you run per month?

Typically 2–4 tests per month, depending on your traffic volume and test complexity. Running too many tests in parallel risks splitting traffic too thin and producing unreliable results — it's better to run fewer tests properly than many tests badly. The exact cadence is set based on your store's traffic after onboarding.

What tools do you use to run tests?

For most Shopify stores, tests are built directly in Shopify using theme variants and traffic splitting — this keeps performance clean and avoids the render-blocking scripts that most A/B testing platforms inject. For more complex setups, we can use Intelligems, Convert, or VWO depending on what your store already has in place. I'll recommend the right approach based on your specific situation on the strategy call.
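For readers curious what traffic splitting involves, one common approach is deterministic bucketing: hash a stable visitor identifier together with the test name so the same visitor always sees the same variant. The sketch below shows the idea in Python for readability. On a live store the logic would sit in theme code or a testing tool, and the identifiers and function name here are hypothetical.

```python
import hashlib


def assign_variant(visitor_id, test_id, variants=("control", "variant")):
    """Map a visitor to a variant, stable across sessions for the same IDs."""
    # Including the test ID keeps assignments independent between tests.
    key = f"{test_id}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) % len(variants)
    return variants[bucket]


# The same visitor lands in the same bucket every time they return.
first_visit = assign_variant("visitor-123", "pdp-gallery-test")
second_visit = assign_variant("visitor-123", "pdp-gallery-test")
print(first_visit, second_visit, first_visit == second_visit)
```

Stable assignment matters because a visitor who sees both versions across sessions muddies the comparison between variants.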

What happens when a test loses?

A losing test isn't wasted — it's data. Every losing variant is documented with the hypothesis, what the data showed, and what that tells us about shopper behaviour on your store. Those learnings feed directly into the next round of hypotheses. Over time, losing tests often point more clearly to what to test next than winning ones do.

Do you handle the implementation of winning tests?

Yes. Winners are hard-coded into your live theme as permanent, clean Shopify code — and the testing script or variant code is removed. You don't end up with a store running on a patchwork of overlays. This also means your store stays fast: no permanently loaded testing scripts affecting page speed for every future visitor.

Can this be combined with a CRO or UX audit?

Yes, and it's usually the best place to start. An audit gives us a prioritised view of what's likely broken before we start testing, so the first tests target the highest-impact areas instead of relying on guesswork. We offer a standalone CRO Audit and a UX/UI Audit that pair well as a starting point for an ongoing testing programme.